Habitat Challenge 2020

Overview

This year, we are hosting challenges on two embodied navigation tasks in Habitat:

- PointNav (‘Go 5m north, 3m west relative to start’)

- ObjectNav (‘find a chair’).

Task #1: PointNav focuses on realism and sim2real predictivity (the ability to predict the performance of a nav-model on a real robot from its performance in simulation).

Task #2: ObjectNav focuses on egocentric object/scene recognition and a commonsense understanding of object semantics (where is a fireplace typically located in a house?).

For details on how to participate, submit, and train agents, refer to the github.com/habitat-challenge repository.

Task 1: PointNav

In PointNav, an agent is spawned at a random starting position and orientation in an unseen environment and asked to navigate to target coordinates specified relative to the agent’s start location (‘Go 5m north, 3m west relative to start’). No ground-truth map is available and the agent must only use its sensory input (an RGB-D camera) to navigate.

Dataset

We use Gibson 3D scenes for the challenge. As in the 2019 Habitat challenge, we use the splits provided by the Gibson dataset, retaining the train and val sets, and separating the test set into test-standard and test-challenge. The train and val scenes are provided to participants. The test scenes are used for the official challenge evaluation and are not provided to participants. Note: The agent size has changed from 2019, so the navigation episodes have changed as well (the wider agent in 2020 rendered many of the 2019 episodes unnavigable).

Evaluation

After calling the STOP action, the agent is evaluated using the ‘Success weighted by Path Length’ (SPL) metric [2].

An episode is deemed successful if on calling the STOP action, the agent is within 0.36m (2x agent-radius) of the goal position.
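As a rough illustration of how the success criterion and SPL fit together, here is a minimal sketch following the SPL definition in [2]: each successful episode contributes the ratio of the shortest-path length to the longer of the agent's path and the shortest path, and failed episodes contribute zero. The `Episode` class, its field names, and the sample numbers are hypothetical and are not taken from the challenge code; treating a distance of exactly 0.36 m as a success is also an assumption.

```python
from dataclasses import dataclass
from typing import Sequence

SUCCESS_RADIUS_M = 0.36  # from the text: 2x agent radius


@dataclass
class Episode:
    """Hypothetical per-episode record (field names are illustrative)."""
    shortest_path_m: float       # shortest-path length from start to goal (assumed > 0)
    agent_path_m: float          # length of the path the agent actually travelled
    final_dist_to_goal_m: float  # distance to the goal when STOP was called


def is_success(ep: Episode) -> bool:
    # Boundary handling (<= vs <) is an assumption of this sketch.
    return ep.final_dist_to_goal_m <= SUCCESS_RADIUS_M


def spl(episodes: Sequence[Episode]) -> float:
    """Success weighted by Path Length, averaged over all episodes."""
    total = 0.0
    for ep in episodes:
        if is_success(ep):
            total += ep.shortest_path_m / max(ep.agent_path_m, ep.shortest_path_m)
    return total / len(episodes)


# Made-up episodes: an inefficient success, a failure, and an optimal success.
episodes = [
    Episode(shortest_path_m=10.0, agent_path_m=12.5, final_dist_to_goal_m=0.2),
    Episode(shortest_path_m=8.0, agent_path_m=8.0, final_dist_to_goal_m=0.5),
    Episode(shortest_path_m=5.0, agent_path_m=5.0, final_dist_to_goal_m=0.1),
]
score = spl(episodes)
print(f"SPL = {score:.3f}")
```

Under these made-up numbers, the first episode contributes 0.8, the second contributes 0 because the agent stopped outside the success radius, and the third contributes 1.0.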

New in 2020

The main emphasis in 2020 is on increased realism and on sim2real predictivity (the ability to predict performance on a real robot from its performance in simulation).

Specifically, we introduce the following changes inspired by our experiments and findings in [3]:

- No GPS+Compass sensor: In 2019, the relative coordinates specifying the goal were continuously updated during agent movement, essentially simulating an agent with perfect localization and heading estimation (e.g. an agent with an idealized GPS+Compass). However, high-precision localization in indoor environments cannot be assumed in realistic settings: GPS has low precision indoors, (visual) odometry may be noisy, SLAM-based localization can fail, etc. Hence, in the 2020 challenge the agent does NOT have a GPS+Compass sensor and must navigate solely using an egocentric RGB-D camera. This change elevates the need to perform RGB-D-based online localization.

- Noisy Actuation and Sensing: In 2019, the agent actions were deterministic, i.e. when the agent executed turn-left 30 degrees, it turned exactly 30 degrees, and forward 0.25 m moved the agent exactly 0.25 m forward (modulo collisions). However, no robot moves deterministically: actuation error, surface properties such as friction, and a myriad of other sources of error introduce significant drift over a long trajectory. To model this, we introduce a noise model that the PyRobot team acquired by benchmarking the LoCoBot robot. We also add noise to the RGB and Depth sensors.

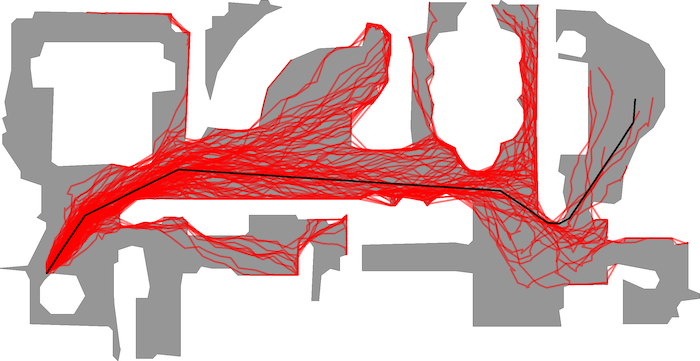

Figure shows the effect of actuation noise. The black line is the trajectory of an action sequence with perfect actuation (no noise). In red are multiple rollouts of this action sequence sampled from the actuation noise model. As we can see, identical action sequences can lead to vastly different final locations.

- Collision Dynamics and ‘Sliding’: In 2019, when the agent took an action that resulted in a collision, the agent slid along the obstacle as opposed to stopping. This behavior is prevalent in video game engines as it allows for smooth human control; it is also enabled by default in MINOS, Deepmind Lab, AI2 THOR, and Gibson v1. We have found that this behavior enables ‘cheating’ by learned agents: the agents exploit this sliding mechanism to take an effective path that appears to travel through non-navigable regions of the environment (like walls). Such policies fail disastrously in the real world, where the robot bump sensors force a stop on contact with obstacles. To rectify this issue, we modify Habitat-Sim to disable sliding on collisions.

- Multiple cosmetic/minor changes: changes in robot embodiment/size, camera resolution, height, and orientation, etc., to match LoCoBot.
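To give a feel for why actuation noise matters, the sketch below replays one fixed action sequence several times with noisy steps and turns. It is only an illustration: the zero-mean Gaussian noise and the standard deviations `FORWARD_STD_M` and `TURN_STD_DEG` are assumptions made for this example and are not the LoCoBot noise model used in the challenge. The 0.25 m step and 30 degree turn are the nominal values quoted above.

```python
import math
import random

FORWARD_M = 0.25  # nominal forward step from the text
TURN_DEG = 30.0   # nominal turn angle from the text

# Hypothetical noise levels, chosen only for illustration.
FORWARD_STD_M = 0.02
TURN_STD_DEG = 3.0


def rollout(actions, rng=None):
    """Return the final (x, y, heading_deg); rng=None means perfect actuation."""
    x, y, heading = 0.0, 0.0, 0.0
    for action in actions:
        if action == "forward":
            step = FORWARD_M
            if rng is not None:
                step += rng.gauss(0.0, FORWARD_STD_M)
            x += step * math.cos(math.radians(heading))
            y += step * math.sin(math.radians(heading))
        elif action in ("turn_left", "turn_right"):
            turn = TURN_DEG
            if rng is not None:
                turn += rng.gauss(0.0, TURN_STD_DEG)
            heading += turn if action == "turn_left" else -turn
        else:
            raise ValueError(f"unknown action: {action}")
    return x, y, heading


# Drive 2 m, turn left 90 degrees, drive another 2 m.
actions = ["forward"] * 8 + ["turn_left"] * 3 + ["forward"] * 8

ideal = rollout(actions)
noisy = [rollout(actions, random.Random(seed)) for seed in range(5)]

print("ideal:", tuple(round(v, 2) for v in ideal))
for end in noisy:
    print("noisy:", tuple(round(v, 2) for v in end))
```

Because heading errors accumulate and every later forward step is taken along the drifted heading, the noisy end points scatter around the ideal one even though the action sequence is identical.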

Task 2: ObjectNav

In ObjectNav, an agent is initialized at a random starting position and orientation in an unseen environment and asked to find an instance of an object category (‘find a chair’) by navigating to it. A map of the environment is not provided and the agent must only use its sensory input to navigate.

The agent is equipped with an RGB-D camera and a (noiseless) GPS+Compass sensor. GPS+Compass sensor provides the agent’s current location and orientation information relative to the start of the episode. We attempt to match the camera specification (field of view, resolution) in simulation to the Azure Kinect camera, but this task does not involve any injected sensing noise.

Dataset

We use 90 of the Matterport3D scenes (MP3D) with the standard splits of train/val/test as prescribed by Anderson et al. [2]. MP3D contains 40 annotated categories. We hand-select a subset of 21 by excluding categories that are not visually well defined (like doorways or windows) and architectural elements (like walls, floors, and ceilings).

Evaluation

We generalize the PointNav evaluation protocol used by [1, 2, 3] to ObjectNav. At a high level, we measure performance along the same two axes:

- Success: Did the agent navigate to an instance of the goal object? (Notice: any instance, regardless of distance from starting location.)

- Efficiency: How efficient was the agent’s path compared to an optimal path? (Notice: optimal path = shortest path from the agent’s starting position to the closest instance of the target object category.)

Concretely, an episode is deemed successful if on calling the STOP action, the agent is within 1.0m Euclidean distance from any instance of the target object category AND the object can be viewed by an oracle from that stopping position by turning the agent or looking up/down. Notice: we do NOT require the agent to be actually viewing the object at the stopping location, simply that such oracle-visibility is possible without moving. Why? Because we want participants to focus on navigation, not object framing. In the larger goal of Embodied AI, the agent is navigating to an object instance in order to interact with it (say, point at or manipulate an object). Oracle-visibility is our proxy for ‘the agent is close enough to interact with the object’.

ObjectNav-SPL is defined analogously to PointNav-SPL. The only key difference is that the shortest path is computed to the object instance closest to the agent start location. Thus, if an agent spawns very close to ‘chair1’ but stops at a distant ‘chair2’, it will achieve 100% success (because it found a ‘chair’) but a fairly low SPL (because the agent path is much longer compared to the oracle path).
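The ‘chair1’ versus ‘chair2’ case can be worked through with a small sketch. The `GoalInstance` class, its fields, and all distances below are hypothetical values made up for illustration. The oracle-visibility flag is taken as a given input rather than computed, and counting a distance of exactly 1.0 m as a success is an assumption.

```python
from dataclasses import dataclass
from typing import Sequence

SUCCESS_DISTANCE_M = 1.0  # from the text


@dataclass
class GoalInstance:
    """Hypothetical record for one instance of the target category."""
    name: str
    shortest_path_from_start_m: float  # shortest path from the agent start to this instance
    euclid_dist_at_stop_m: float       # Euclidean distance from the stopping position
    oracle_visible: bool               # visible from the stop position by turning or looking up/down


def objectnav_success(instances: Sequence[GoalInstance]) -> bool:
    # Success if ANY instance is both close enough and oracle-visible.
    return any(
        g.euclid_dist_at_stop_m <= SUCCESS_DISTANCE_M and g.oracle_visible
        for g in instances
    )


def objectnav_spl(instances: Sequence[GoalInstance], agent_path_m: float) -> float:
    if not objectnav_success(instances):
        return 0.0
    # The reference path goes to the instance closest to the start location.
    shortest = min(g.shortest_path_from_start_m for g in instances)
    return shortest / max(agent_path_m, shortest)


# The agent spawns near chair1 but walks 12 m and stops next to chair2.
chairs = [
    GoalInstance("chair1", shortest_path_from_start_m=1.5,
                 euclid_dist_at_stop_m=9.0, oracle_visible=False),
    GoalInstance("chair2", shortest_path_from_start_m=12.0,
                 euclid_dist_at_stop_m=0.6, oracle_visible=True),
]
success = objectnav_success(chairs)
episode_spl = objectnav_spl(chairs, agent_path_m=12.0)
print(success, episode_spl)
```

With these numbers the episode counts as a success, but the SPL is 1.5 / 12 = 0.125, since the reference path is the short one to ‘chair1’.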

Participation Guidelines

Participate in the contest by registering on the EvalAI challenge page and creating a team. Participants will upload docker containers with their agents, which are evaluated on an AWS GPU-enabled instance. Before pushing a submission for remote evaluation, participants should test the submission docker locally to make sure it is working. Instructions for training, local evaluation, and online submission are provided below.

Valid challenge phases are habitat20-{pointnav, objectnav}-{minival, test-std, test-ch}.
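The brace notation above is shorthand for six phase names, one per combination of track and split. A short sketch that expands it (the variable names are illustrative only):

```python
from itertools import product

TRACKS = ("pointnav", "objectnav")
SPLITS = ("minival", "test-std", "test-ch")

# Expand habitat20-{track}-{split} into the full list of phase names.
phases = [f"habitat20-{track}-{split}" for track, split in product(TRACKS, SPLITS)]

for name in phases:
    print(name)
```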

The challenge consists of the following phases:

- Minival phase: This split is the same as the one used in ./test_locally_{pointgoal, objectnav}_rgbd.sh. The purpose of this phase is sanity checking: to confirm that our remote evaluation reports the same result as the one you’re seeing locally. Each team is allowed a maximum of 30 submissions per day for this phase, but please use them judiciously. We will block and disqualify teams that spam our servers.

- Test Standard phase: The purpose of this phase/split is to serve as the public leaderboard establishing the state of the art; this is what should be used to report results in papers. Each team is allowed a maximum of 10 submissions per day for this phase, but again, please use them judiciously. Don’t overfit to the test set.

- Test Challenge phase: This split will be used to decide challenge winners. Each team is allowed a total of 5 submissions until the end of the challenge submission phase. The highest performing of these 5 will be automatically chosen. Results on this split will not be made public until the announcement of final results at the Embodied AI workshop at CVPR.

- Optional Test Challenge phase for PointNav track (Habitat to Gibson sim2real): Top-5 teams from the Habitat Test Standard phase will have a chance to participate in the Gibson Sim2Real Challenge for their Phase 2 (Real World phase) and potentially Phase 3 (Demo phase). Additional submission to the Gibson challenge will be required.

Note: Your agent will be evaluated on 1000-2000 episodes and will have a total available time of 24 hours to finish. Your submissions will be evaluated on an AWS EC2 p2.xlarge instance, which has a Tesla K80 GPU (12 GB Memory), 4 CPU cores, and 61 GB RAM. If you need more time or resources to evaluate your submission, please get in touch. If you face any issues or have questions, you can ask them on the habitat-challenge forum (coming soon).
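To put the time limit in perspective, a quick back-of-the-envelope calculation of the average wall-clock budget per episode, using only the 24-hour limit and the 1000-2000 episode range quoted above (the function name is illustrative):

```python
TOTAL_BUDGET_S = 24 * 60 * 60  # 24 hours in seconds


def seconds_per_episode(num_episodes: int) -> float:
    """Average wall-clock budget per episode if the full 24 hours is used."""
    return TOTAL_BUDGET_S / num_episodes


budget_low = seconds_per_episode(1000)
budget_high = seconds_per_episode(2000)
print(f"1000 episodes: {budget_low:.1f} s per episode")
print(f"2000 episodes: {budget_high:.1f} s per episode")
```

That is roughly 86 seconds per episode at 1000 episodes and about 43 seconds at 2000, which must cover simulation, rendering, and agent inference.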

Citing Habitat Challenge 2020

Please cite the following paper for details about the 2020 PointNav challenge:

@inproceedings{habitat2020sim2real,
  title = {Are {W}e {M}aking {R}eal {P}rogress in {S}imulated {E}nvironments? {M}easuring the {S}im2{R}eal {G}ap in {E}mbodied {V}isual {N}avigation},
  author = {{Abhishek Kadian*} and {Joanne Truong*} and Aaron Gokaslan and Alexander Clegg and Erik Wijmans and Stefan Lee and Manolis Savva and Sonia Chernova and Dhruv Batra},
  booktitle = {arXiv:1912.06321},
  year = {2019}
}

Please cite the following paper for details about the 2020 ObjectNav challenge:

@inproceedings{batra2020objectnav,
  title = {Object{N}av {R}evisited: {O}n {E}valuation of {E}mbodied {A}gents {N}avigating to {O}bjects},
  author = {Dhruv Batra and Aaron Gokaslan and Aniruddha Kembhavi and Oleksandr Maksymets and Roozbeh Mottaghi and Manolis Savva and Alexander Toshev and Erik Wijmans},
  booktitle = {arXiv:2006.13171},
  year = {2020}
}

Acknowledgments

The Habitat challenge would not have been possible without the infrastructure and support of the EvalAI team. We are also grateful for the work behind the Gibson and Matterport3D datasets.

References

1. Habitat: A Platform for Embodied AI Research. Manolis Savva*, Abhishek Kadian*, Oleksandr Maksymets*, Yili Zhao, Erik Wijmans, Bhavana Jain, Julian Straub, Jia Liu, Vladlen Koltun, Jitendra Malik, Devi Parikh, Dhruv Batra. IEEE/CVF International Conference on Computer Vision (ICCV), 2019.

2. On evaluation of embodied navigation agents. Peter Anderson, Angel Chang, Devendra Singh Chaplot, Alexey Dosovitskiy, Saurabh Gupta, Vladlen Koltun, Jana Kosecka, Jitendra Malik, Roozbeh Mottaghi, Manolis Savva, Amir R. Zamir. arXiv:1807.06757, 2018.

3. Are We Making Real Progress in Simulated Environments? Measuring the Sim2Real Gap in Embodied Visual Navigation. Abhishek Kadian*, Joanne Truong*, Aaron Gokaslan, Alexander Clegg, Erik Wijmans, Stefan Lee, Manolis Savva, Sonia Chernova, Dhruv Batra. arXiv:1912.06321, 2019.

Results

Habitat Challenge EmbodiedAI 2020 Workshop talk:

Challenge EmbodiedAI 2020 Workshop talk slides: download.

PointNav Challenge Leaderboard (sorted by SPL)

| Rank | Team | SPL | SOFT_SPL | DISTANCE_TO_GOAL | SUCCESS |
|---|---|---|---|---|---|
| 1 | OccupancyAnticipation | 0.21 | 0.50 | 2.29 | 0.28 |
| 2 | ego-localization | 0.15 | 0.60 | 1.82 | 0.19 |
| 3 | DAN | 0.13 | 0.24 | 4.00 | 0.25 |
| 4 | Information Bottleneck | 0.06 | 0.43 | 2.72 | 0.09 |
| 5 | cogmodel_team | 0.01 | 0.33 | 4.27 | 0.01 |
| 6 | UCULab | 0.001 | 0.11 | 5.97 | 0.002 |

PointNav Challenge Winners Talks

1st Place: Occupancy Anticipation Team (Santhosh Kumar Ramakrishnan, Ziad Al-Halah, Kristen Grauman) presents the ‘Occupancy Anticipation for Efficient Exploration and Navigation’ approach.

2nd Place: Ego-Localization Team (Samyak Datta, Oleksandr Maksymets, Judy Hoffman, Stefan Lee, Dhruv Batra, Devi Parikh) presents the ‘Integrating Egocentric Localization for More Realistic PointGoal Navigation Agents’ approach.

ObjectNav Challenge Leaderboard (sorted by SPL)

| Rank | Team | SPL | SOFT_SPL | DISTANCE_TO_GOAL | SUCCESS |
|---|---|---|---|---|---|
| 1 | Arnold | 0.10 | 0.18 | 6.33 | 0.25 |
| 2 | SRCB-Robot-Sudoer | 0.10 | 0.22 | 6.91 | 0.19 |
| 3 | Active Exploration | 0.05 | 0.17 | 7.34 | 0.13 |
| 4 | Black Sheep | 0.03 | 0.16 | 7.03 | 0.10 |
| 5 | Blue Ox | 0.02 | 0.14 | 7.23 | 0.07 |
| 6 | UCULab | 0.001 | 0.11 | 5.97 | 0.002 |

ObjectNav Challenge Winners Talks

1st Place: Arnold Team (Devendra Singh Chaplot, Dhiraj Prakashchand Gandhi, Abhinav Gupta, Ruslan Salakhutdinov) presents the ‘Object Goal Navigation using Goal-oriented Semantic Exploration’ approach.

2nd Place: SRC-B Robot Sudoer Team from Samsung Research China - Beijing (Yang Liu, Jili Li, Ruoceng Zhang, Wei Wei, Meng Li, Yifei Guo) presents the ‘Memoryless Wanderer’ approach.

Prizes

The top 2 winning teams in each of the PointNav and ObjectNav tracks received Google Cloud Platform (GCP) credits worth $10k each. Thank you, GCP, for your generosity!

Dates

| Event | Date |
|---|---|
| Challenge starts | February 24, 2020 |
| Leaderboard opens | March 2, 2020 |
| Challenge submission deadline | May 31, 2020 |

Organizer

Participation

For details on how to participate, submit, and train agents, refer to the github.com/habitat-challenge repository.

Please note that the latest submission to the test-challenge split will be used for final evaluation.

Sponsors